This guide is different. It gives you the technical depth to make that call on your own terms, including the specific dimensions where Apache Artemis has genuine advantages, the areas where Apache ActiveMQ definitively holds its ground, and an honest accounting of what migration actually costs. It also names something most comparison guides won’t: the vendor dynamics shaping how this conversation is framed in the industry.

Why Apache ActiveMQ and Apache Artemis Are Fundamentally Different Systems

Most confusion about Apache ActiveMQ vs Apache Artemis starts with a false premise: that Apache Artemis is a refactored or upgraded version of Apache ActiveMQ. It is not.

In 2026, the Apache Software Foundation formalized this distinction by splitting the two projects. Apache Artemis became a top-level project (TLP) within Apache, independent of the original Apache ActiveMQ project. They are now simply called Apache ActiveMQ and Apache Artemis.

On July 8, 2014, the HornetQ codebase, the Red Hat-developed, JBoss-backed message broker, was donated to the Apache Software Foundation and contributed to the ActiveMQ project as the next-generation broker.

That origin story matters more than most comparisons acknowledge. HornetQ was Red Hat’s product. When it was contributed to Apache under the Apache Artemis name, Red Hat’s engineering investment followed.

HornetQ was already a mature, production-proven system with a radically different internal architecture than Apache ActiveMQ: built from the ground up as a fully asynchronous, non-blocking system, with an append-only journal for persistence and a protocol-neutral internal model.

The Apache community has spent the years since adding JMS/OpenWire compatibility layers, broadening protocol support, and closing the feature gap with Apache ActiveMQ.

But “architected differently” does not mean “architected better” for every workload. Understanding that distinction, rather than accepting the migration-first consensus, is the foundation for every practical comparison that follows.

Architecture Deep-Dive: Six Dimensions That Decide the Choice

1. I/O Layer: Blocking TCP vs. Netty Non-Blocking

Apache ActiveMQ gives you a choice: tcp (synchronous, one thread per connection) or nio (non-blocking, built on the Java NIO API). In practice, many teams chose based on workload intuition rather than measurement, and the difference was not always obvious at moderate scale.

Apache Artemis uses Netty exclusively for all transport-layer I/O, non-blocking by default, with no configuration choice required.

The practical consequence is felt at connection density. Apache ActiveMQ’s blocking TCP transport creates a thread per connection. At 500+ concurrent client connections, thread stack memory and context-switching overhead become measurable. Apache Artemis’s Netty reactor model handles thousands of concurrent connections on a small, bounded thread pool.

For microservices environments with many short-lived producer connections, this architectural difference is real. For environments with stable, moderate connection counts, like many enterprise integration deployments, Apache ActiveMQ’s threading model is well-understood and operationally mature, with decades of production tuning behind it.
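To put rough numbers on the thread-per-connection cost, the sketch below estimates reserved thread-stack memory for both models. It assumes a 1 MB stack per thread (a common JVM default on 64-bit Linux, adjustable with -Xss) and a hypothetical pool of 16 event-loop threads; neither figure comes from a measurement of either broker.

```python
def thread_per_connection_stack_mb(connections: int, stack_kb: int = 1024) -> float:
    """Reserved stack memory when every client connection owns one thread."""
    return connections * stack_kb / 1024


def reactor_stack_mb(event_loop_threads: int, stack_kb: int = 1024) -> float:
    """Reserved stack memory when a fixed thread pool serves all connections."""
    return event_loop_threads * stack_kb / 1024


if __name__ == "__main__":
    # 16 event-loop threads is an illustrative assumption, not a broker default.
    for connections in (100, 500, 5000):
        blocking = thread_per_connection_stack_mb(connections)
        reactor = reactor_stack_mb(16)
        print(f"{connections} connections: blocking={blocking} MB, reactor={reactor} MB")
```

The blocking figure grows linearly with connection count while the reactor figure stays flat. Stack memory is reserved rather than fully committed, so treat these values as an upper bound and measure on your own hardware.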

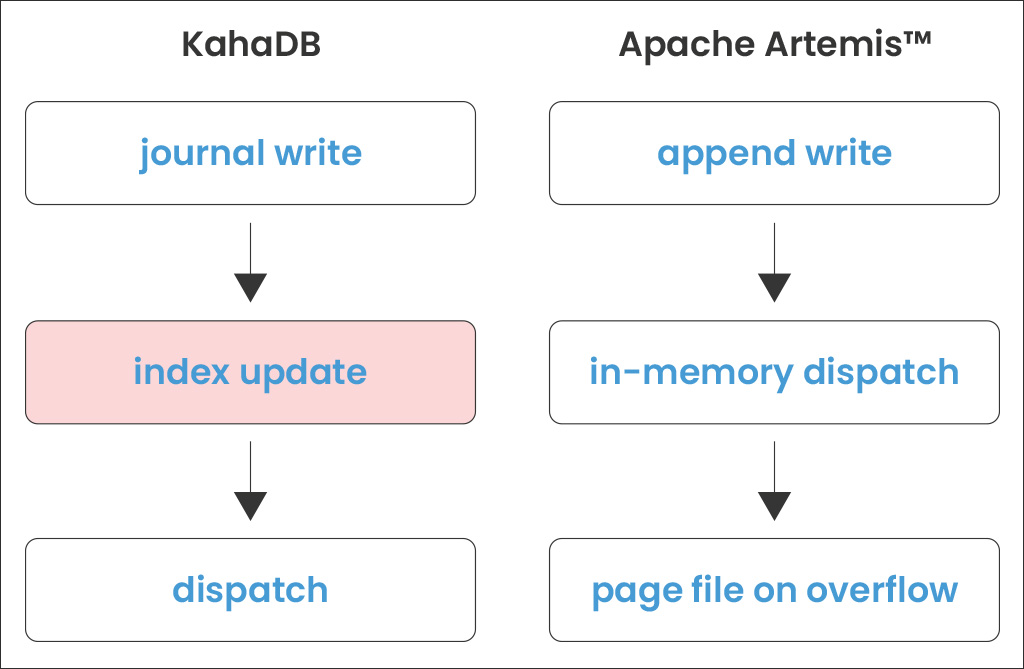

2. Persistence: KahaDB vs. the Apache Artemis Append-Only Journal

Apache ActiveMQ uses KahaDB as its default persistence layer, a message journal for fast sequential writes paired with a message index for retrieval by destination and message ID.

The index is the operational weight in this model. Every enqueue and dequeue requires an index update. Under high write pressure, this becomes contention. After an unclean broker shutdown, index recovery is the primary cause of slow restart times and, occasionally, store corruption requiring manual KahaDB repair.

Apache Artemis uses an append-only message journal with no message index. The journal is kept in memory, and messages are dispatched directly from it.
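The idea of an append-only journal without an index can be illustrated with a toy model. This is a conceptual sketch only: the class, record names, and in-memory list are hypothetical and do not reflect the on-disk format of the Apache Artemis journal.

```python
from dataclasses import dataclass, field


@dataclass
class AppendOnlyJournal:
    """Toy journal: every change is appended, nothing is updated in place."""
    records: list = field(default_factory=list)

    def add(self, msg_id: int, body: str) -> None:
        self.records.append(("ADD", msg_id, body))

    def ack(self, msg_id: int) -> None:
        # Consumption is written as a new record instead of an index update.
        self.records.append(("DELETE", msg_id, None))

    def recover(self) -> dict:
        """Rebuild the live message set after a restart by replaying records."""
        live = {}
        for kind, msg_id, body in self.records:
            if kind == "ADD":
                live[msg_id] = body
            else:
                live.pop(msg_id, None)
        return live
```

Recovery here is a sequential replay rather than an index rebuild, which is the property the comparison with KahaDB turns on.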

The tradeoff is significant: the in-memory journal model works best when messages move through the broker and do not accumulate. When Apache Artemis cannot hold all incoming messages in memory, it pages them to disk, a paging model that differs fundamentally from KahaDB’s cursor approach.

Understanding whether your broker is operating in-journal or in-paging mode is the single most important diagnostic question for Apache Artemis performance troubleshooting, and it’s one more unfamiliar operational surface compared to Apache ActiveMQ’s well-documented behavior.
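A toy model makes the in-memory versus paging distinction concrete. The max_size_bytes parameter loosely mirrors the max-size-bytes address setting in Apache Artemis, but the class and its behavior are a simplified assumption, not the broker’s actual paging algorithm. It also assumes every message is smaller than the memory budget.

```python
from collections import deque


class PagingAddress:
    """Toy address that pages to 'disk' once an in-memory byte budget is hit."""

    def __init__(self, max_size_bytes: int):
        self.max_size_bytes = max_size_bytes
        self.memory = deque()   # messages available for direct dispatch
        self.paged = deque()    # stand-in for page files on disk
        self.memory_bytes = 0

    @property
    def is_paging(self) -> bool:
        return bool(self.paged)

    def send(self, body: bytes) -> None:
        # Once paging has started, new messages keep going to disk to keep order.
        if self.is_paging or self.memory_bytes + len(body) > self.max_size_bytes:
            self.paged.append(body)
        else:
            self.memory.append(body)
            self.memory_bytes += len(body)

    def consume(self) -> bytes:
        body = self.memory.popleft()
        self.memory_bytes -= len(body)
        # Depage while the freed memory has room for the oldest paged message.
        while self.paged and self.memory_bytes + len(self.paged[0]) <= self.max_size_bytes:
            restored = self.paged.popleft()
            self.memory.append(restored)
            self.memory_bytes += len(restored)
        return body
```

The is_paging flag is the diagnostic the paragraph above refers to: the same address behaves very differently depending on whether consumers keep up with producers.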

Both brokers also support JDBC persistence. The Apache Artemis documentation is explicit: JDBC carries a performance cost relative to the file journal. Use the file journal for production unless a relational database is a hard architectural requirement.

3. Messaging Model: JMS Destinations vs. the Address/Queue/Routing-Type Model

This is the most conceptually significant difference in the Apache Artemis vs Apache ActiveMQ comparison, and the one that most commonly surprises teams mid-migration.

Apache ActiveMQ was built as a JMS implementation first. Queues and topics are first-class citizens at the core of the broker. Every other protocol (AMQP, MQTT, STOMP) is translated internally into OpenWire and routed through the JMS destination model.

This protocol translation is invisible to most users, but carries a semantic cost: AMQP properties without an OpenWire equivalent are silently dropped or mapped to the nearest available concept.

Apache Artemis implements only queues internally, with all messaging patterns achieved through addresses, queues, and routing types:

- Anycast routing maps a message to a single queue, implementing point-to-point semantics.

- Multicast routing copies a message into a queue for each subscriber, implementing publish/subscribe semantics.

- An address can be configured for anycast, multicast, or both simultaneously.
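The routing types listed above can be sketched as a small in-memory model. The class and method names are hypothetical, and the round-robin choice among several anycast queues is a simplifying assumption rather than a statement about the broker’s exact distribution logic.

```python
class Address:
    """Toy address: anycast picks one queue, multicast copies to every queue."""

    def __init__(self, name: str):
        self.name = name
        self.queues = {"ANYCAST": {}, "MULTICAST": {}}
        self._round_robin = 0

    def add_queue(self, queue: str, routing_type: str) -> None:
        self.queues[routing_type][queue] = []

    def route(self, message: str, routing_type: str) -> list:
        """Deliver the message and return the names of the queues that got it."""
        targets = self.queues[routing_type]
        if not targets:
            return []
        if routing_type == "ANYCAST":
            names = list(targets)
            chosen = [names[self._round_robin % len(names)]]
            self._round_robin += 1
        else:
            chosen = list(targets)
        for queue in chosen:
            targets[queue].append(message)
        return chosen
```

For example, an address with one anycast queue and two multicast queues delivers an anycast message to exactly one queue and a multicast message to both subscriber queues.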

For teams running polyglot environments (AMQP producers feeding JMS consumers, or MQTT IoT devices writing to queues consumed by Java services), the Apache Artemis model offers genuine flexibility. For teams using JMS exclusively, this difference is largely invisible: the OpenWire compatibility layer handles the mapping transparently.

The important caveat for migration: this model change is not cosmetic. Teams that have built deep operational runbooks, monitoring queries, and routing logic around Apache ActiveMQ’s queue/topic model will find this a meaningful mental model shift, not just a configuration update.

4. Protocol Stack: OpenWire-Centric vs. Protocol-Native

Both brokers support OpenWire, AMQP 1.0, MQTT 3.1, and STOMP. Apache Artemis additionally supports MQTT 5.0 and its own native CORE protocol. The difference is in how those protocols are handled internally.

Apache ActiveMQ is OpenWire-centric: every inbound protocol is translated into OpenWire before reaching the broker’s routing logic. An AMQP 1.0 message is converted to OpenWire, routed, and potentially converted back to AMQP on delivery. This translation chain adds latency and can drop properties that do not map cleanly between protocols.

Apache Artemis handles all protocols natively against the internal address model. An AMQP message remains an AMQP message throughout its lifecycle on the broker, with no lossy protocol translation. For regulated industries or financial systems where message fidelity and property preservation are audit requirements, this distinction is architectural.

Apache Artemis’s native CORE protocol is the highest-performance wire protocol when both producer and consumer are JVM-based. For intra-datacenter service-to-service messaging where you control both sides of the connection, CORE provides measurably lower latency than OpenWire or AMQP.

5. High Availability: File Locks vs. Network Replication

Apache ActiveMQ offers two proven HA models: shared file system master/slave (file-lock-based, requiring a SAN or NFSv4) and JDBC master/slave (database-lock-based). Both are operationally simple, well-understood, and require no additional infrastructure beyond shared storage or a database, a major operational advantage for teams that value predictable, auditable HA behavior.

Apache Artemis supports shared store HA (conceptually equivalent to Apache ActiveMQ’s shared file system model) and network replication HA (no shared storage required). The replication model is more sophisticated, requiring quorum-based split-brain protection and backup warmup time, but it supports cloud-native environments without shared block storage.
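The reason replication needs quorum-based protection can be shown with a generic strict-majority check. This is not the voting implementation used by Apache Artemis; the function name and voter counts are hypothetical and only illustrate why an isolated backup in a two-node pair cannot safely promote itself.

```python
def has_quorum(votes_for: int, voters: int) -> bool:
    """True when strictly more than half of all voters agree."""
    return votes_for > voters // 2


if __name__ == "__main__":
    # A backup cut off from its primary holds only its own vote.
    print("2 voters, 1 vote:", has_quorum(1, 2))
    print("3 voters, 2 votes:", has_quorum(2, 3))
```

With two voters a single node never reaches a majority, so a network partition cannot be told apart from a primary failure. A third voter is the smallest arrangement in which one side of a partition can win.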

For on-premises enterprise deployments where shared storage is already part of the infrastructure, Apache ActiveMQ’s HA model is battle-tested and operationally transparent. The Apache Artemis replication model’s advantages are most material in cloud and Kubernetes environments.

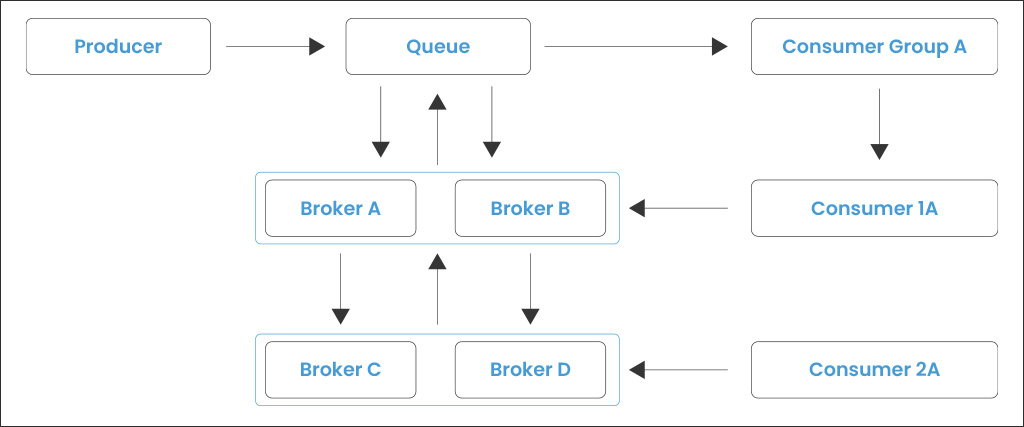

6. Clustering: Network of Brokers vs. Apache Artemis Cluster

Apache ActiveMQ uses the Network of Brokers (NoB) model for horizontal scale. Independent broker nodes are connected via network connectors and exchange messages using store-and-forward routing. Each broker is autonomous; the network topology must be carefully designed to avoid message cycling, TTL exhaustion, and infinite forwarding loops.
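The interaction between topology design, hop limits, and loop avoidance can be sketched as a graph walk. The network_ttl parameter is loosely modeled on the networkTTL setting of Apache ActiveMQ network connectors, but the hop-counting semantics here are simplified and the function is a hypothetical illustration.

```python
from collections import deque


def reachable_brokers(topology: dict, origin: str, network_ttl: int) -> set:
    """Brokers a message can reach when each hop consumes one unit of TTL."""
    seen = {origin}
    frontier = deque([(origin, 0)])
    while frontier:
        broker, hops = frontier.popleft()
        if hops == network_ttl:
            continue  # TTL exhausted: the message is not forwarded further
        for peer in topology.get(broker, ()):
            if peer not in seen:  # never revisiting a broker prevents loops
                seen.add(peer)
                frontier.append((peer, hops + 1))
    return seen


if __name__ == "__main__":
    ring = {"A": ["B"], "B": ["C"], "C": ["A"]}
    print(reachable_brokers(ring, "A", network_ttl=1))
    print(reachable_brokers(ring, "A", network_ttl=2))
```

A TTL that is too low strands messages short of their consumers, while a topology with cycles depends on the visited-broker check to terminate. Both failure modes are design-time concerns in a Network of Brokers.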

Apache Artemis uses a cluster model with built-in server-side message load balancing. Cluster connections are declared in broker.xml, and Apache Artemis redistributes messages automatically when consumer demand shifts. The model is more automatic but provides less explicit routing control than Apache ActiveMQ’s NoB.

For teams with deep Apache ActiveMQ NoB expertise, this is a meaningful operational model change, not just a configuration update. The loss of explicit routing control is a genuine tradeoff in environments where message routing behavior must be precisely auditable.

Performance: What the Evidence Actually Shows

Apache Artemis is architecturally better positioned for high-throughput scenarios at scale. The combination of non-blocking Netty I/O, the index-free journal, and direct-from-memory dispatch enables Apache Artemis to sustain higher message rates without the GC pressure and I/O contention that Apache ActiveMQ accumulates under extreme load.

But three nuances matter enormously for an honest evaluation, and they’re frequently omitted from comparisons written by parties with a stake in the Apache Artemis narrative:

At a low-to-moderate scale, Apache ActiveMQ can match or beat Apache Artemis.

When connection counts are low and message volumes are modest, Apache ActiveMQ’s simpler, more mature runtime achieves latency comparable to or lower than that of a freshly configured Apache Artemis instance.

Apache Artemis’s runtime footprint (Netty, the address-model indirection, and the larger JVM baseline) incurs overhead that is only offset by its scalability advantages at higher load. Enterprise workloads that are not pushing throughput ceilings have no reason to assume Apache Artemis is faster.

Protocol choice inside Apache Artemis has a significant performance impact.

Within Apache Artemis, AMQP and STOMP carry more serialization overhead than OpenWire or CORE. For internal JVM-to-broker traffic where you control both ends of the connection, CORE is the right protocol.

Defaulting to AMQP for intra-cluster traffic is a common Apache Artemis misconfiguration that introduces avoidable latency, and one that erodes any performance advantage over Apache ActiveMQ in real-world deployments.

Configuration quality matters more than broker selection.

A poorly tuned Apache Artemis instance (journal on a shared disk, paging misconfigured, thread pool undersized) will underperform a well-tuned Apache ActiveMQ deployment.

The Apache Artemis performance tuning documentation explicitly recommends keeping the message journal on a dedicated physical volume. Sharing that volume with other I/O-heavy processes negates the append-only advantage entirely.

The bottom line on performance: Apache Artemis’s architectural advantages are real at scale. They are not universally decisive, and the premise that Apache Artemis is simply “faster than Apache ActiveMQ” without qualification is an oversimplification that serves a particular narrative more than it serves architects making deployment decisions.

Feature Gaps: What Apache Artemis Still Doesn’t Replicate from Apache ActiveMQ

The Apache ActiveMQ project is explicit: Apache Artemis is not intended to be a 100% reimplementation of every Apache ActiveMQ feature. Some Apache ActiveMQ capabilities do not make architectural sense in the Apache Artemis model and are not being ported. These are the gaps that surface most frequently in real-world migration assessments:

Advisory Messages

Apache ActiveMQ publishes advisory messages for broker events (connections, destination creation, message expiry, and slow consumers) on ActiveMQ.Advisory.* topics. Apache Artemis has a management notification system, but it is not a drop-in replacement for advisory listeners. Applications built on advisory consumption require architectural rework before Apache Artemis is viable.
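One way to size this rework early is to scan application sources for references to advisory topics. The sketch below is a hypothetical audit helper: it only looks for the ActiveMQ.Advisory. prefix in text you pass in, and it will miss destinations that are assembled at runtime.

```python
import re

# Matches literal advisory destination names such as ActiveMQ.Advisory.Connection.
ADVISORY_PATTERN = re.compile(r"ActiveMQ\.Advisory\.[A-Za-z0-9_.>*]+")


def find_advisory_usage(sources: dict) -> dict:
    """Map each file name to the sorted advisory destinations referenced in it."""
    hits = {}
    for name, text in sources.items():
        found = sorted(set(ADVISORY_PATTERN.findall(text)))
        if found:
            hits[name] = found
    return hits


if __name__ == "__main__":
    sample = {
        "Monitor.java": 'session.createTopic("ActiveMQ.Advisory.Connection");',
        "Orders.java": 'session.createQueue("orders");',
    }
    print(find_advisory_usage(sample))
```

In practice the sources dictionary would be built by reading files from the repository; the file names above are placeholders.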

Composite Destinations (Virtual Topics)

Apache ActiveMQ’s composite destination feature fans a single send out to multiple queues or topics. Apache Artemis handles this pattern differently through the address model, but the mapping is not one-to-one and requires deliberate reconfiguration.

Cursor-Based Destination Policies

Apache ActiveMQ’s memory management for queues is built around cursors, cached message lists filled from the store when memory allows. Destination policies written against cursor behavior (memory limits, store usage thresholds, prefetch sizes) do not translate directly to Apache Artemis’s paging model.

| Dimension | Apache ActiveMQ 5.x | Apache Artemis 2.x | Edge |
| --- | --- | --- | --- |
| I/O Architecture | Blocking TCP + optional NIO | Non-blocking Netty (always) | Artemis |
| Default Persistence | KahaDB (indexed journal) | Append-only journal (no index) | Artemis |
| High-throughput scale | Good | Better under scale | Artemis |
| Low-scale / low-latency | Competitive | Slight overhead at small scale | ActiveMQ |
| Protocol handling | OpenWire translation (all) | Native per protocol | Artemis |
| JMS compatibility | Native, first-class | Full via OpenWire layer | Tie |
| HA without shared storage | Not supported | Replication model | Artemis |
| Cloud / Kubernetes fit | Works, less natural | Better fit natively | Artemis |
| Advisory messages | Full support | No direct equivalent | ActiveMQ |
| Composite destinations | Supported | Requires reconfiguration | ActiveMQ |
| Configuration complexity | Lower | Higher | ActiveMQ |
| Community investment | Maintenance mode | Active development | Artemis |
| Operational maturity | Very high (20+ yrs) | High (10+ yrs) | ActiveMQ |

Wildcard Syntax Differences

Apache ActiveMQ’s OpenWire uses > for multi-level wildcards. Apache Artemis natively uses # (though the OpenWire compatibility layer handles the conversion for JMS clients). Custom routing logic using wildcards should be validated on Apache Artemis before any migration.
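When auditing routing rules, a small helper can translate patterns between the two syntaxes. This sketch assumes the default conventions on both sides (a dot as word separator and * as the single-word wildcard), so only the multi-level token changes. The function name is hypothetical, and brokers with customized wildcard settings need a different mapping.

```python
def openwire_to_artemis_wildcard(pattern: str) -> str:
    """Translate an ActiveMQ-style destination pattern to Artemis default syntax."""
    # Only the multi-level wildcard differs: '>' becomes '#'.
    return ".".join("#" if word == ">" else word for word in pattern.split("."))


if __name__ == "__main__":
    for pattern in ("orders.>", "orders.*.eu", "orders.eu.created"):
        print(pattern, "->", openwire_to_artemis_wildcard(pattern))
```

A translation table like this is useful for reviewing configuration and monitoring filters; it does not replace testing the converted patterns against a running Apache Artemis broker.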

These are not minor inconveniences. For enterprises with deep Apache ActiveMQ operational investments in integration patterns, application code, monitoring tooling, and operational runbooks, these gaps represent real re-engineering costs that advocates of a “just migrate to Apache Artemis” position tend to underweight.

Head-to-Head Decision Matrix: Apache ActiveMQ vs Apache Artemis

When to Move to Apache Artemis

- You are starting a new deployment with no Apache ActiveMQ investment to protect

- You are deploying on Kubernetes or a cloud environment without shared block storage

- You need polyglot protocol support (AMQP producers alongside JMS or MQTT consumers) without protocol translation overhead

- You need sustained throughput above 20,000-30,000 messages/second on a single broker

- You have a compliance or procurement requirement for software on an active commercial support track

- You are planning for a 3+ year operational horizon

When to Stay on Apache ActiveMQ (With a Migration Roadmap)

- Your existing Apache ActiveMQ deployment is stable, well-understood, and meeting its SLAs today

- You rely on advisory messages and cannot absorb the rearchitecting cost in your current planning window

- Your team has deep Apache ActiveMQ operational expertise and limited capacity for a parallel migration project

- Your message volumes are low-to-moderate, and Apache ActiveMQ is not a current bottleneck

- You have a significant investment in Apache ActiveMQ-based monitoring tooling, integration patterns, or runbooks

- Your workload profile does not require the connection density or throughput scale where Apache Artemis’s architectural advantages materialize

The key word is planning, not urgency. Migration complexity only grows over time, and a structured roadmap beats a reactive cutover. But “should be on a roadmap” is not the same as “migrate immediately,” and treating it that way serves the migration consulting market more than it serves your organization.

What Migration from Apache ActiveMQ to Apache Artemis Actually Costs

Most migration content underestimates the effort by focusing only on client code changes. The full picture:

- Client code (usually low effort): In most cases, JMS clients using OpenWire connect to Apache Artemis without changes. Extensions like advisory listeners, composite destinations, and custom destination policies require rework.

- Configuration translation (moderate effort): Apache ActiveMQ uses activemq.xml. Apache Artemis uses broker.xml with a different schema. There is no automated translator. Transport connector configuration, persistence adapter settings, destination policies, and security configuration must all be manually re-expressed.

- Persistence migration (variable effort): A KahaDB data directory cannot be mounted in Apache Artemis. For migrations where in-flight messages can be drained before cutover, the problem is manageable. For live cutovers with persistent backlogs, wire-based migration between a running Apache ActiveMQ and Apache Artemis instance requires careful planning.

- HA topology redesign (moderate-to-high effort): If your Apache ActiveMQ deployment uses shared file system HA, Apache Artemis shared store HA is conceptually similar. Apache Artemis replication means building a new HA configuration from scratch.

- Monitoring and operations tooling (often the biggest surprise): Apache ActiveMQ and Apache Artemis have different JMX MBean trees, different metric names, and different management APIs. Dashboards, alerting rules, and runbook scripts built against Apache ActiveMQ need to be rebuilt for Apache Artemis. This is consistently the most underestimated workload in migration projects.
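The monitoring gap is easiest to see by comparing how the same queue is addressed over JMX. The object-name layouts and attribute names below follow the patterns commonly seen in Apache ActiveMQ 5.x and Apache Artemis 2.x, but treat them as assumptions and confirm them with a JMX browser against your own brokers. The helper functions are hypothetical.

```python
def activemq_queue_object_name(broker_name: str, queue: str) -> str:
    """JMX ObjectName of a queue MBean in the ActiveMQ 5.x layout (assumed)."""
    return (
        f"org.apache.activemq:type=Broker,brokerName={broker_name},"
        f"destinationType=Queue,destinationName={queue}"
    )


def artemis_queue_object_name(
    broker_name: str, address: str, queue: str, routing_type: str = "anycast"
) -> str:
    """JMX ObjectName of a queue MBean in the Artemis 2.x layout (assumed)."""
    return (
        f'org.apache.activemq.artemis:broker="{broker_name}",component=addresses,'
        f'address="{address}",subcomponent=queues,'
        f'routing-type="{routing_type}",queue="{queue}"'
    )


# Attribute that reports queue depth on each broker's queue MBean (assumed names).
DEPTH_ATTRIBUTE = {"activemq": "QueueSize", "artemis": "MessageCount"}

if __name__ == "__main__":
    print(activemq_queue_object_name("localhost", "orders"))
    print(artemis_queue_object_name("0.0.0.0", "orders", "orders"))
```

Every dashboard query and alert rule that embeds the first form has to be rewritten to the second, including the extra address and routing-type keys that have no counterpart in Apache ActiveMQ.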

The Bottom Line on Apache ActiveMQ vs Apache Artemis in 2026

Apache Artemis is a capable, well-engineered broker with genuine advantages in high-throughput, cloud-native, and polyglot protocol environments. For greenfield deployments in those contexts, it is often the right choice.

But the narrative that Apache Artemis is simply superior, that Apache ActiveMQ is legacy infrastructure to be urgently replaced, does not survive honest scrutiny. It reflects a vendor-driven framing, not a universal technical truth.

Apache ActiveMQ continues to power mission-critical workloads at major enterprises, backed by deep operational maturity and a broad community of expertise that was not built by a single vendor.

The right choice depends on your workload, your environment, your team’s expertise, and your operational investment, not on which broker generates the most migration consulting revenue.

meshIQ supports enterprises running both Apache ActiveMQ and Apache Artemis, with deep expertise in Apache ActiveMQ-based deployments and the architecture depth to help you evaluate migration on your terms, not someone else’s timeline.


Frequently Asked Questions

What is the difference between Apache ActiveMQ and Apache Artemis?

Apache ActiveMQ (5.x) is the original JMS-centric broker built around KahaDB persistence and a configurable blocking/NIO I/O layer, a mature, production-proven system with over two decades of real-world deployments. Apache Artemis is architecturally distinct, originating from Red Hat’s HornetQ project, donated to Apache in 2014, and built on non-blocking Netty I/O, an index-free append-only journal, and a protocol-agnostic address model. They share a project umbrella but are fundamentally different systems with different strengths.

Is Apache Artemis faster than Apache ActiveMQ?

At enterprise scale and high connection density, Apache Artemis’s non-blocking Netty I/O and index-free journal deliver higher sustained throughput. At low-to-moderate scale with stable connection counts, Apache ActiveMQ can match or slightly outperform Apache Artemis due to Apache Artemis’s larger runtime footprint. The blanket claim that “Apache Artemis is faster” is an oversimplification; configuration quality and workload profile matter more than broker selection for most deployments.

Can existing Apache ActiveMQ JMS clients connect to Apache Artemis without code changes?

In most cases, yes. Apache Artemis includes an OpenWire compatibility layer that allows JMS clients written for Apache ActiveMQ to connect without modification. Applications using Apache ActiveMQ-specific extensions (advisory message listeners, composite destinations, and cursor-based destination policies) require rework before migrating to Apache Artemis.

How does the Apache Artemis address model differ from Apache ActiveMQ’s queues and topics?

Apache Artemis implements only queues internally, routing messages to them via addresses with routing types. Anycast routing implements point-to-point semantics; multicast routing implements publish/subscribe. A single address can support both simultaneously, something Apache ActiveMQ’s separate queue/topic model cannot do. This matters most for polyglot environments where producers and consumers use different protocols against the same broker. For JMS-only deployments, the OpenWire compatibility layer handles the mapping transparently.

What is the Apache Artemis console?

The Apache Artemis console is the broker’s built-in Hawtio-based web management UI. It allows operators to browse addresses and queues, inspect messages, monitor journal and memory usage, and run management operations in real time. Teams migrating from Apache ActiveMQ will find it more powerful in some respects, but will need to rebuild monitoring queries and runbooks to match Apache Artemis’s different MBean structure and address model, a workload that is consistently underestimated in migration planning.

Who controls the Apache Artemis project?

Apache Artemis is an Apache Software Foundation project, but its contributor base is heavily concentrated: approximately 90% of active contributors are Red Hat employees. This means Red Hat has significant influence over Apache Artemis’s roadmap and the industry narrative around it. Architects evaluating Apache ActiveMQ vs Apache Artemis should factor this vendor dynamic into how they weigh comparative claims, particularly claims about Apache ActiveMQ’s obsolescence or Apache Artemis’s universal superiority.